Difference between revisions of "Projects:Sketchy recognition"

(→Working sketches) |

(→Working sketches) |

||

| Line 89: | Line 89: | ||

* binoculars http://vandal.ist/diversions2019/mim/sketchrecog.html#3206 | * binoculars http://vandal.ist/diversions2019/mim/sketchrecog.html#3206 | ||

* piano - laptop http://vandal.ist/diversions2019/mim/sketchrecog.html#2012.072 | * piano - laptop http://vandal.ist/diversions2019/mim/sketchrecog.html#2012.072 | ||

| + | * Cell phone http://vandal.ist/diversions2019/mim/sketchrecog.html#3873-02 | ||

=== Rough notes (not for publication ;) === | === Rough notes (not for publication ;) === | ||

Revision as of 15:13, 30 August 2019

Sketchy Recognition (working title) (formerly Different orders co-exist)

Collections: Koninklijke Musea voor Kunst en Geschiedenis/MIM

Contents

Description

NEEDS UPDATING

Een van de eerste stappen om een reeks documenten te begrijpen, is door ze te ordenen. Naast het gevoel van controle dat het je geeft, vertelt elke ordening een ander verhaal over een archief. Een opsomming van alle elementen door middel van ‘unique id’ bijvoorbeeld is gebaseerd op de interne index die de database genereert om de informatie bij te houden. Maar die ordening vertelt ook over de menselijke arbeid die het "systeem voedt". De elementen werden één voor één geïntroduceerd, door één of meer mensen, op het moment dat het materiaal beschikbaar was. En de opeenvolging van 'unieke ids' weerspiegelt de opeenvolging van inserts in de database, en laat daarmee iets zien van de relaties tussen mensen, informatie en machines. Different orders co-exist stelt dat we geen directe toegang hebben tot een archief en de bijbehorende documenten, maar enkel via een dialoog met veelal technologische en algoritmische gesprekspartners.

Installation

- (single-board, ie Raspberry Pi) Computer

- Camera

- Drawing surface

- (mini) projector and/or adjacent display

Interactive installation where participants / visitors can draw forms which are interpreted live by a closed-circuit computer vision (CCCV) system. Based on a "ready-made" model trained on sketches from one of 250 pre-determined categories. The model is also used to make connections between the visitors drawing and items from the collection of the Musical Intrument Museum (MIM).

bio

Nicolas Malevé is beeldend kunstenaar, computer programmeur en data-activist. Op dit moment woont en werkt hij tussen Brussel en Londen aan een onderzoek naar hoe en waarom machinale normen over ‘kijken’ in Computer Vision algoritmes worden geïmplementeerd.

Michael Murtaugh doet onderzoek naar community databases, interactieve documentaire en tools voor nieuwe vormen van online lezen en schrijven. Hij is als docent betrokken bij het Experimental Publishing Traject van de Media Design Master aan het Piet Zwart Instituut in Rotterdam.

Notice

Bread, Noge, Kangaroo or Teddy Bear?

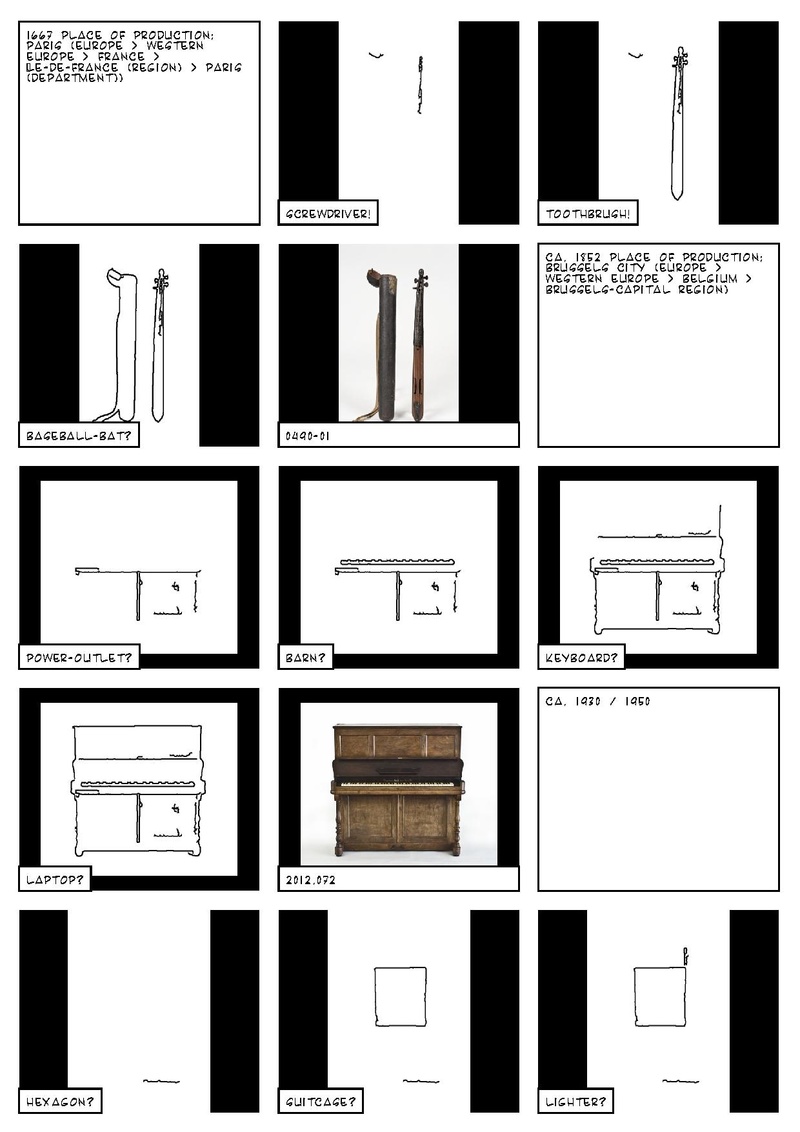

A photograph from the collection of the Museum of Musical Instrument is processed by a contour detector algorithm. The algorithm draws the lines it found on the image sequentially. While it is tracing the contours, another algorithm, a sketch detector, tries to guess what is being drawn. Is it bread? A kangaroo? It is a teddy bear.

Sketch Recognition (working title) is an attempt to provoke a dialogue with, and between, algorithms, visitors and museum collections.

Cast:

- Musical instruments: MIM collection, Brussels.

- Line detector: The Hough algorithm in the OpenCV toolbox, originally developed to analyse bubble chamber photographs.

- Sketch recognizer: an algorithm based on the research of Eitz, Hays and Alexa (2012), and the code and models by Jean-Baptiste Alayrac.

- Data: from the hands of the many volunteers who contributed to Google's Quick, Draw! Dataset.

- Special sauce, bugs and fixes: Michael and Nicolas

Sources

- MIM/Carmentis Saskia Willaerts

- Contours / Algorithm (what is the algorithm in use in opencv?)

- Sketch recognition database / programmer of model -- Using Jean-Baptiste Alayrac's pre-trained model

(Re)sources

- Code for this project

- You were asked to draw an angel

- Assisted drawing ... original blog post

- How Do Humans Sketch Objects?, Eitz, Hays, Alexa (2012)

Humans have used sketching to depict our visual world since prehistoric times. Even today, sketching is possibly the only rendering technique readily available to all humans. This paper is the first large scale exploration of human sketches. We analyze the distribution of non-expert sketches of everyday objects such as 'teapot' or 'car'. We ask humans to sketch objects of a given category and gather 20,000 unique sketches evenly distributed over 250 object categories. With this dataset we perform a perceptual study and find that humans can correctly identify the object category of a sketch 73% of the time. We compare human performance against computational recognition methods. We develop a bag-of-features sketch representation and use multi-class support vector machines, trained on our sketch dataset, to classify sketches. The resulting recognition method is able to identify unknown sketches with 56% accuracy (chance is 0.4%). Based on the computational model, we demonstrate an interactive sketch recognition system. We release the complete crowd-sourced dataset of sketches to the community.

Code

- C/C++ implementation for the paper "How do Human Sketch Objects?" https://github.com/GTmac/Classify-Human-Sketches

- Python/Jupyter https://github.com/ajwadjaved/Sketch-Recognizer

- Jean-Baptist Alayrac's working python code (what we ended up using)

Working sketches

some "best of" links:

- Teddy bear ... http://vandal.ist/diversions2019/mim/sketchrecog.html#4306-02

- Ice cream cone ... http://vandal.ist/diversions2019/mim/sketchrecog.html#4290

- A panda encircled by a guitar ... http://vandal.ist/diversions2019/mim/sketchrecog.html#1981.039-01

- screwdriver, toothbrush, baseball bat ... http://vandal.ist/diversions2019/mim/sketchrecog.html#0490-01

- binoculars http://vandal.ist/diversions2019/mim/sketchrecog.html#3206

- piano - laptop http://vandal.ist/diversions2019/mim/sketchrecog.html#2012.072

- Cell phone http://vandal.ist/diversions2019/mim/sketchrecog.html#3873-02

Rough notes (not for publication ;)

cf Saskia's story of misnaming an instrument. (The end of which was that the African museum contacted wanted not that the instrument be returned, but that the name be updated to reflect the fact that the name incorrectly referred to a larger class of instruments, and not the particular instrument in question)

How explicit do we need to be with our intentionality. Danger: Flatten the potential? Maybe keep it simple / straightforward

Meta data as interstitial frames introducing the sequences of images + sketch predictions.

Algorithms reading algorithms...